Amazon Web Services announced that it will invest $100 million in an AWS Generative AI Innovation Center. The program aims to connect AWS AI and machine learning (ML) experts with customers around the globe to help them envision, design, and launch new generative AI products, services, and processes.

“Amazon has more than 25 years of AI experience, and more than 100,000 customers have used AWS AI and ML services to address some of their biggest opportunities and challenges. Now, customers around the globe are hungry for guidance about how to get started quickly and securely with generative AI,” said Matt Garman, senior vice president of Sales, Marketing, and Global Services at AWS. “The Generative AI Innovation Center is part of our goal to help every organization leverage AI by providing flexible and cost-effective generative AI services for the enterprise, alongside our team of generative AI experts to take advantage of all this new technology has to offer. Together with our global community of partners, we’re working with business leaders across every industry to help them maximize the impact of generative AI in their organizations, creating value for their customers, employees, and bottom line.”

The center will offer workshops, engagements, and training. Customers will work closely with generative AI experts from AWS and the AWS Partner Network to select the right models, define paths to navigate technical or business challenges, develop proofs of concept, and make plans for launching solutions at scale. The Generative AI Innovation Center team will provide guidance on best practices for applying generative AI responsibly and optimizing machine learning operations to reduce costs.

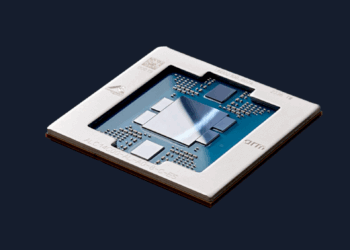

Engagements will deliver strategy, tools, and assistance that will help customers use AWS generative AI services, including Amazon CodeWhisperer, an AI-powered coding companion, and Amazon Bedrock, a fully managed service that makes foundation models (FMs) from AI21 Labs, Anthropic, and Stability AI, along with Amazon’s own family of FMs, Amazon Titan, accessible via an API. Customers can also train and run their models using high-performance infrastructure, including AWS Inferentia-powered Amazon EC2 Inf1 instances, AWS Trainium-powered Amazon EC2 Trn1 instances, and Amazon EC2 P5 instances powered by NVIDIA H100 Tensor Core GPUs. Additionally, customers can build, train, and deploy their own models with Amazon SageMaker or use Amazon SageMaker JumpStart to deploy some of today’s most popular FMs, including Cohere’s large language models, Technology Innovation Institute’s Falcon 40B, and Hugging Face’s BLOOM.