NVIDIA launched the H200 Tensor Core GPU, which is based on the Hopper architecture and designed with advanced memory to handle massive amounts of data for generative AI and high-performance computing workloads.

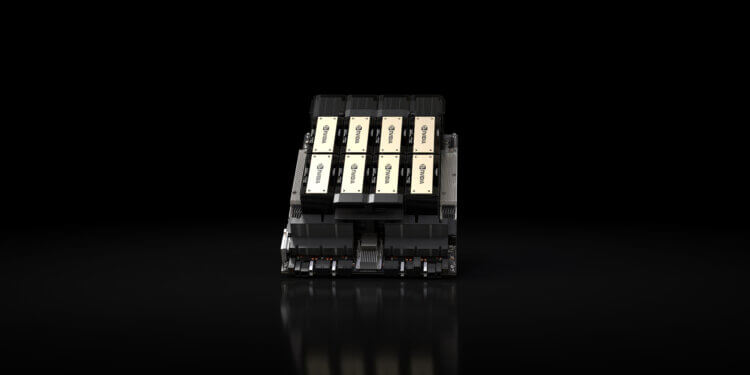

The NVIDIA H200 is the first GPU to offer HBM3e, a faster, larger memory that fuels the acceleration of generative AI and large language models while advancing scientific computing for HPC workloads. With HBM3e, the NVIDIA H200 delivers 141 GB of memory at 4.8 terabytes per second, nearly double the capacity and 2.4x more bandwidth compared with its predecessor, the NVIDIA A100.
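
As a rough sanity check on those figures, the short Python sketch below works out two numbers the quoted specifications imply: the bandwidth of the comparison GPU behind the 2.4x claim, and the idealized time to read the full 141 GB once at peak bandwidth. The function names are hypothetical, decimal units (1 TB = 1000 GB) are assumed, and "2.4x more" is read as a 2.4x multiple. Real workloads will not sustain peak bandwidth, so treat the results as bounds rather than measurements.

```python
# Figures quoted in the article; decimal units assumed (1 TB = 1000 GB).
H200_MEMORY_GB = 141.0
H200_BANDWIDTH_TBPS = 4.8
BANDWIDTH_RATIO = 2.4  # assumption: "2.4x more bandwidth" read as a 2.4x multiple


def implied_baseline_bandwidth_tbps(new_tbps: float, ratio: float) -> float:
    """Bandwidth of the comparison GPU implied by the quoted ratio."""
    return new_tbps / ratio


def full_sweep_ms(memory_gb: float, bandwidth_tbps: float) -> float:
    """Idealized time in milliseconds to read all of memory once at peak bandwidth."""
    gb_per_second = bandwidth_tbps * 1000.0
    return memory_gb / gb_per_second * 1000.0


if __name__ == "__main__":
    baseline = implied_baseline_bandwidth_tbps(H200_BANDWIDTH_TBPS, BANDWIDTH_RATIO)
    sweep = full_sweep_ms(H200_MEMORY_GB, H200_BANDWIDTH_TBPS)
    print(f"Implied baseline bandwidth: {baseline:.1f} TB/s")
    print(f"Full-memory sweep at peak bandwidth: {sweep:.1f} ms")
```

Under these assumptions the implied baseline is about 2 TB/s, and a single pass over the whole memory takes a little under 30 ms. That second figure gives a feel for why memory bandwidth matters for workloads that must repeatedly stream large model weights.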

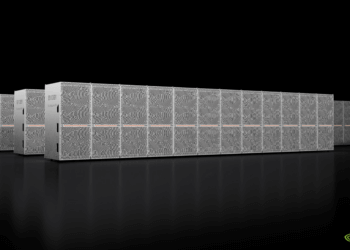

H200-powered systems from the world’s leading server manufacturers and cloud service providers are expected to begin shipping in the second quarter of 2024.

NVIDIA H200 will be available in NVIDIA HGX H200 server boards with four- and eight-way configurations, which are compatible with both the hardware and software of HGX H100 systems.

NVIDIA says the introduction of the H200 will lead to further performance leaps, including nearly doubling inference speed on Llama 2, a 70-billion-parameter LLM, compared to the H100. The company expects additional performance gains from future software updates.

Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure will be among the first cloud service providers to deploy H200-based instances starting in 2024, in addition to CoreWeave, Lambda and Vultr.

“To create intelligence with generative AI and HPC applications, vast amounts of data must be efficiently processed at high speed using large, fast GPU memory,” said Ian Buck, vice president of hyperscale and HPC at NVIDIA. “With NVIDIA H200, the industry’s leading end-to-end AI supercomputing platform just got faster to solve some of the world’s most important challenges.”